An introduction to Utility AI

When it comes to game AI, making the “right” decision can get complicated fast. It’s easy to say that when a Sim is hungry they should eat, but what if they’re both hungry and bored? Which need should they satisfy first? And considering there are typically multiple ways to satisfy each need, such as making a meal versus ordering pizza, which specific action is the “right” choice here? Or what if all of their needs are reasonably satisfied so they don’t have anything that needs immediate attention? Rather than stand and stare into space, they should still do something.

In a combat encounter, even a modest engagement might leave a unit with several options that all seem decent on the surface. Should a unit run up to an opponent to deal strong melee damage, but risk exposing themselves, or should they hang back in cover and opt for a weaker ranged attack? How should their current health and ammo impact this decision?

It’s a lot to figure out and a simple state machine or behavior tree with some if statements really won’t cut it here. The situations your AI can find itself in are just too varied and murky. At best you’ll have something extremely predictable, and therefore both boring and exploitable. At worst your AI just won’t work how you want it to.

This is where Utility AI can be useful.

Utility AI

Utility AI tries to answer the question “What’s the best action I can take right now?” by allowing for objective comparison between actions that can be as similar or as different as the game demands. This objectivity comes from assigning a normalized score in the range 0-1 to each action an entity can take in that moment and then selecting the action with the highest score for execution. This score is typically referred to as the action’s “utility”, hence the name of the technique. We use the term utility because how useful an action is depends on the game state in that moment. A melee attack may always do a lot of damage, but if no enemy is within range, it has no utility to us because we can’t actually apply that damage to anything. Conversely, if an enemy unit is right next to us, is low on health, and is weak to our attack type, the same melee attack is now extremely useful. If another enemy is also within range but has more health, attacking it still has some utility, since it still reduces an enemy’s health; it just won’t have as much utility as attacking the more vulnerable enemy.
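To make the core loop concrete, here is a minimal Python sketch: score every available action against the current context and pick the highest. The `Action` type, the dict-based context, and the `enemies_in_melee_range` / `health_pct` keys are hypothetical stand-ins for your own game state, and the melee scorer only models “is anyone in range, and how hurt is the weakest target”, not attack-type weaknesses.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Sequence

# Hypothetical context type: a plain dict of whatever game state the scorers need.
Context = Dict[str, Any]


@dataclass
class Action:
    name: str
    # Returns this action's utility in [0, 1] for the given context.
    score: Callable[[Context], float]


def choose_action(actions: Sequence[Action], context: Context) -> Action:
    """Score every candidate action in the current context and return the best one."""
    return max(actions, key=lambda action: action.score(context))


def melee_utility(context: Context) -> float:
    """No utility if no enemy is in range; otherwise, more utility the weaker the weakest target."""
    in_range = context["enemies_in_melee_range"]
    if not in_range:
        return 0.0
    weakest = min(enemy["health_pct"] for enemy in in_range)
    return 1.0 - weakest


ACTIONS = [
    Action("melee_attack", melee_utility),
    # A low, constant fallback so the unit always has something to do.
    Action("wait", lambda context: 0.1),
]

if __name__ == "__main__":
    print(choose_action(ACTIONS, {"enemies_in_melee_range": []}).name)
    print(choose_action(ACTIONS, {"enemies_in_melee_range": [{"health_pct": 0.2}, {"health_pct": 0.9}]}).name)
```

With nobody in range the melee attack scores 0 and the unit waits; with a badly hurt enemy nearby, the melee attack wins.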

In practice, utility scoring has proven to be an effective and powerful tool for making decisions regardless of game genre. While it’s easy to point to combat as an example of decision making, since those situations tend to involve thinking about optimal strategy, utility scoring also comes up often in other, more peaceful games. Zoo Tycoon 2 used it to decide both animal and guest actions, changing which actions were considered depending on the current context, and The Sims series uses it, along with some extra design considerations I’ll come back to later, to decide what action a Sim should take when left to their own devices.

It’s also worth noting that Utility AI is not concerned with how to take a given action, only with which action to take. That means the concepts behind it are fully compatible with whatever technique you’re using to execute actions in your game, whether that’s a state machine or some other mechanism. The AI simply says “this is the action to take,” and your game code is responsible for turning that into actionable data however you see fit, making it a highly flexible system. More than that, because we’re concerned only with the what and not the how, this system lends itself to exposing its levers and knobs to designers and other non-technical people on the project. That allows for rapid iteration and testing without modifying the codebase every time you want to tweak something (though really allowing for creativity without touching any code may require some custom tooling).

Scoring actions

Scoring is where all the magic happens in a utility system, and how you convert the varied data of your game state into a normalized number is central to the process. Unit positions, stat values, items in your vicinity: it can all play a part. One of the first steps in building a utility system is to figure out how you’ll access the current game state, typically referred to as the “context”, and turn the relevant data into numbers. This process may vary depending on what the game looks like, but as a general solution, Dave Mark, one of the experts on Utility AI systems design, has discussed the idea of a “clearing house”: a place where you can ask for data from anywhere in the game and receive it back in a normalized state.
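One way a clearing house might look, sketched under the assumption that game state arrives as a dict. `ClearingHouse`, `register`, `get`, and the `hp` / `max_hp` / `ammo` keys are hypothetical names for illustration, not an API from Dave Mark’s talks. The important part is the contract: every query comes back clamped to 0-1, so scorers never have to normalize raw data themselves.

```python
from typing import Any, Callable, Dict

Context = Dict[str, Any]


def clamp01(value: float) -> float:
    """Clamp a value into [0, 1]."""
    return max(0.0, min(1.0, value))


class ClearingHouse:
    """Hypothetical central lookup: ask for a named value, get it back normalized to [0, 1]."""

    def __init__(self) -> None:
        self._queries: Dict[str, Callable[[Context], float]] = {}

    def register(self, name: str, query: Callable[[Context], float]) -> None:
        self._queries[name] = query

    def get(self, name: str, context: Context) -> float:
        # Clamp so every consumer can rely on the [0, 1] contract,
        # even if a query overshoots (e.g. overheal, temporary buffs).
        return clamp01(self._queries[name](context))


house = ClearingHouse()
house.register("health_pct", lambda ctx: ctx["hp"] / ctx["max_hp"])
house.register("ammo_pct", lambda ctx: ctx["ammo"] / ctx["max_ammo"])

print(house.get("health_pct", {"hp": 30, "max_hp": 120}))  # 0.25
```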

If we want to score a healing action, for instance, we might ask the clearing house for the unit’s current health as a percentage of their total health (so we know whether we even need to be concerned with healing right now), combine that with information from the current context to figure out how much health the given action will recover, and then calculate a score based on those factors. Taking some time during a lull in combat to heal back to max health, even if it’s not critical, could be worth it to prepare for the next round, while healing only a percent or two when we’re about to die may not be worth it compared to trying to defeat whatever’s attacking us, or even just retreating to a safer spot.

Break out the TI-83

But how do you decide what score, exactly, to assign to an action when there are multiple things to consider? What makes healing 25% of your health when you have 30% left different from healing 40% of your health when you’ve got half of it left? The key is to score each consideration separately and then multiply all of the scores together to get a final score for that action.

Taking our healing action as an example again, say the need to heal based on the unit’s current health gives us a score of 0.5, and the amount we’ll heal from this action gives us a score of 0.8. This results in the calculation 0.5 * 0.8 = 0.4, which will be our final score for this particular healing action. But rather than just throwing out arbitrary numbers to get a score, each consideration can, and should, be modeled on a curve.
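The multiplication step is small enough to show directly. This sketch uses Python’s `math.prod` (available since Python 3.8); the function name `combine_considerations` is just an illustrative choice.

```python
import math
from typing import Iterable


def combine_considerations(scores: Iterable[float]) -> float:
    """Multiply independent consideration scores (each in [0, 1]) into one action score.

    Because every factor is in [0, 1], the product also stays in [0, 1].
    """
    return math.prod(scores)


need_to_heal = 0.5  # score from the current-health consideration
heal_amount = 0.8   # score from the amount-healed consideration
print(combine_considerations([need_to_heal, heal_amount]))  # 0.4
```

One side effect worth knowing: since each factor is at most 1, adding more considerations can only keep a score the same or pull it down.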

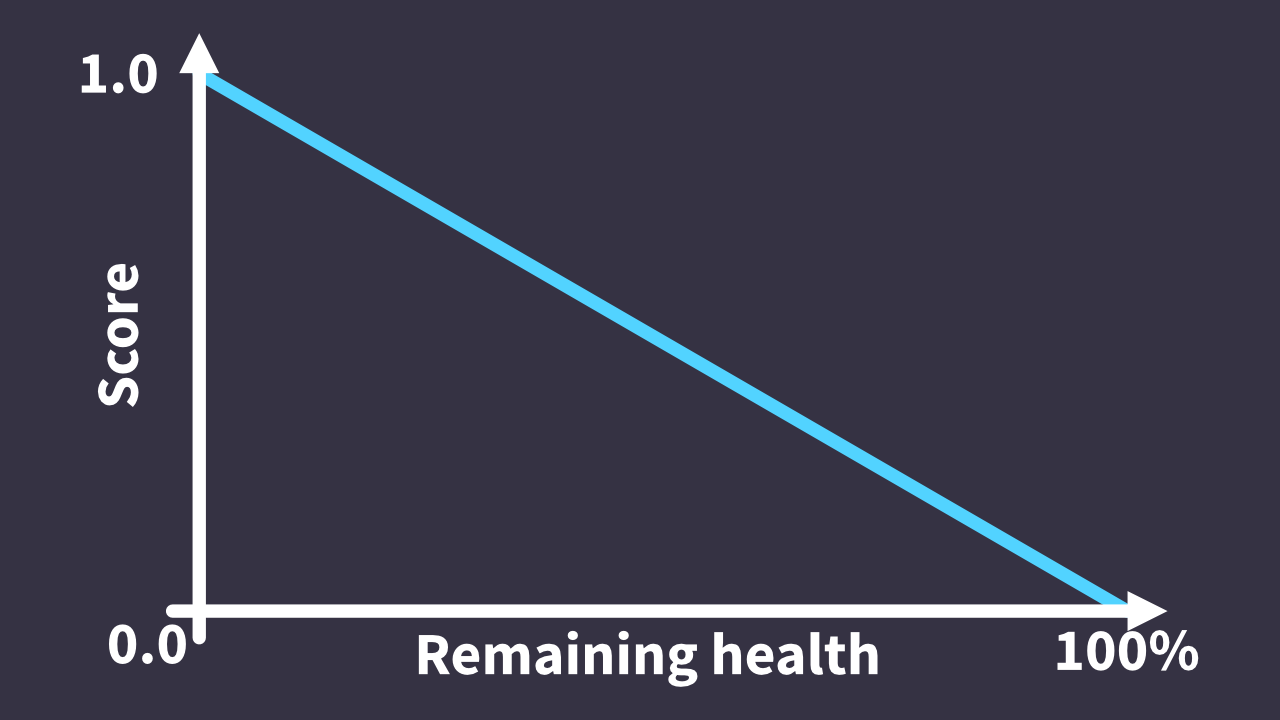

To properly score our healing action, let’s first look at the utility of healing based on how low on health we are. We could start by defining a simple inverse linear curve that gives a score of 0 at max health and 1 on the verge of death, meaning we won’t even try to heal at max health but will almost certainly decide to do so when we’re down to our last few hit points. That curve could be defined as utility = 1 - (% of total health), with health expressed as a fraction from 0 to 1. (This actually means we won’t get a perfect 1.0 score until we’re dead, but anything reasonably high should typically win out.)

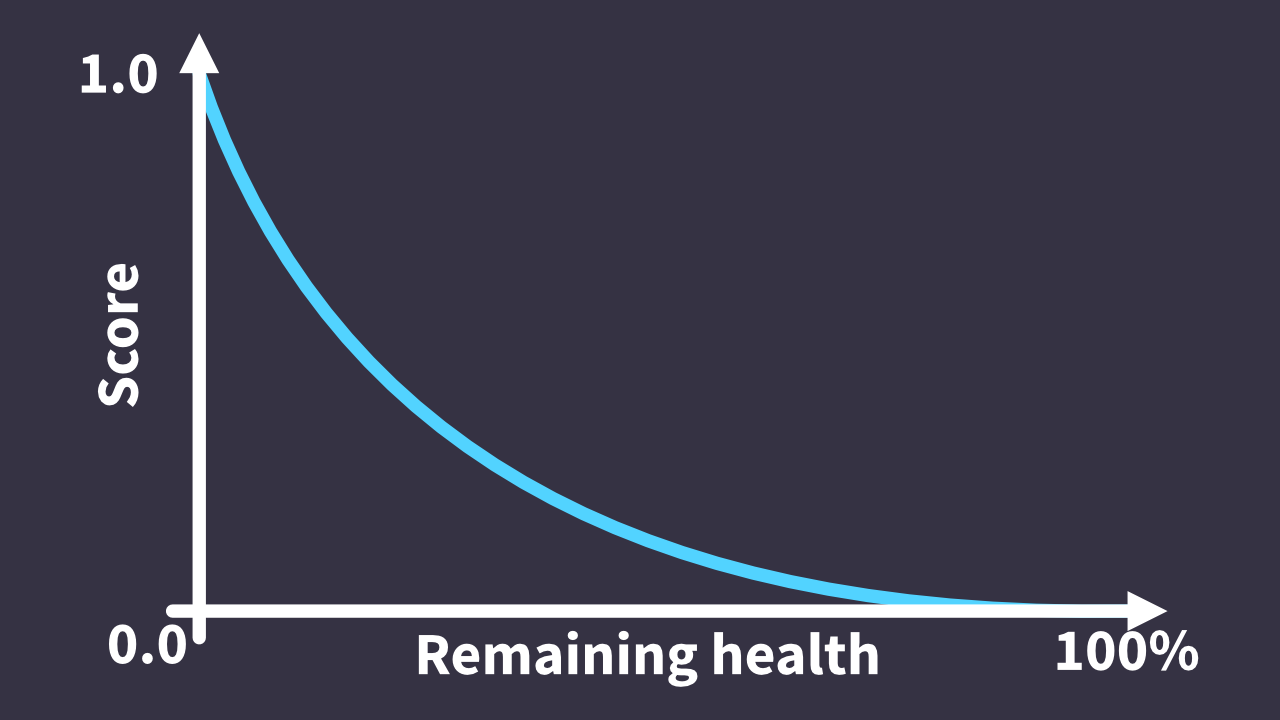

Not bad for a first attempt: our unit should heal when low on health. But this curve probably gives too much weight to healing when we’re still almost at full health. Instead, we may want a curve that scores healing lower when health is high but really ramps things up once we reach a critical point. Playing around in Desmos, the curve (1 - % of total health) / ((2 * % of total health) + 1) looks closer to what I’d like. Now, when health is relatively high, this consideration ranks healing actions low, scoring them at only 0.25 at half health, but as health decreases further, the score ramps up quickly, reaching 0.5 at 25% health and 0.75 at 10% health. As we playtest our game, we’ll continue to tweak the parameters of the curve, or even change the type of curve entirely, to get the effect we want. After all, what I’ve done here is just a starting point to build off of.
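Both curves from this section can be written as plain functions, which makes it easy to print a few sample points and check them against the numbers quoted above. Health is taken as a fraction from 0 to 1; the function names are illustrative.

```python
def linear_heal_need(health_pct: float) -> float:
    """First attempt: utility = 1 - health (0 at full health, 1 at zero health)."""
    return 1.0 - health_pct


def ramped_heal_need(health_pct: float) -> float:
    """Second attempt: (1 - h) / (2h + 1), low at high health, ramping up as health drops."""
    return (1.0 - health_pct) / (2.0 * health_pct + 1.0)


for h in (1.0, 0.5, 0.25, 0.1, 0.0):
    print(f"health {h:.2f}: linear {linear_heal_need(h):.2f}, ramped {ramped_heal_need(h):.2f}")
```

Both curves agree at the endpoints (0 at full health, 1 at zero health); they differ in how quickly the score climbs in between, which is exactly the knob you end up tuning during playtesting.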

For the utility of how much we’ll heal from an action, we’ll again plot the percentage of our total health the action restores on some curve and tweak the parameters accordingly. Want to consider more inputs, such as the action point cost of different actions, cooldown times, etc.? Just add more curves to score each independent consideration and multiply all of the scores together. Or maybe you have a condition that means “no matter what, do not select this action.” In that case, you can score that consideration at 0; multiplying it with the other considerations, regardless of their scores, gives the action a final score of 0, meaning it should never get chosen.
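Putting the pieces together for a single healing action might look like the sketch below. The amount-healed curve (a square root over health we can actually recover, so overhealing earns nothing) and the `on_cooldown` veto are assumptions chosen for illustration; the need-to-heal curve is the (1 - h) / (2h + 1) curve described earlier.

```python
import math


def heal_need(health_pct: float) -> float:
    """(1 - h) / (2h + 1): how badly we need healing at this health level."""
    return (1.0 - health_pct) / (2.0 * health_pct + 1.0)


def heal_amount_utility(health_pct: float, heal_pct: float) -> float:
    """Hypothetical curve for how much an action heals.

    Only health we can actually recover counts (no credit for overhealing),
    and the square root gives diminishing returns on larger heals.
    """
    effective = min(heal_pct, 1.0 - health_pct)
    return math.sqrt(max(0.0, effective))


def score_heal_action(health_pct: float, heal_pct: float, on_cooldown: bool) -> float:
    considerations = [
        heal_need(health_pct),
        heal_amount_utility(health_pct, heal_pct),
        0.0 if on_cooldown else 1.0,  # hard veto: a cooldown zeroes the whole action
    ]
    return math.prod(considerations)


print(score_heal_action(0.25, 0.25, on_cooldown=False))  # 0.5 * 0.5 = 0.25
print(score_heal_action(0.25, 0.25, on_cooldown=True))   # vetoed: 0.0
```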

More techniques

So that’s how we can do some basic action scoring. And while that’s enough to put together an AI that can make some pretty complex decisions, it’s not always quite what we want. Always picking the top scoring action, while effective, may make for an AI that feels too predictable, or maybe just a bit too optimal for player enjoyment.

Randomizing decisions

One easy way to add variety to the decision making process is to apply a bit of randomness to how the decision is made. Rather than always picking the top scoring action, randomly choosing any action whose score is within some margin of the top score can add variety to the AI while still keeping it on the right track. So if we have actions with the following scores:

| Action | Score |
|---|---|
| Melee - Sword | 0.7 |
| Melee - Shield | 0.65 |
| Magic - Fireball | 0.62 |
| Ranged - Bow | 0.4 |
| Defend | 0.2 |

We could say that any action within 0.1 of the top scoring action is an acceptable alternative, resulting in three attacks to choose from and adding a bit of variety to the gameplay.
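A minimal sketch of this “pick among the near-best” rule, using the scores from the table and an absolute margin of 0.1. The function names are illustrative; choosing uniformly among the candidates is one simple option, and weighting the draw by score is another.

```python
import random
from typing import Dict, List, Optional


def candidates_within_margin(scores: Dict[str, float], margin: float) -> List[str]:
    """All actions whose score is within `margin` (absolute) of the best score."""
    best = max(scores.values())
    return [name for name, score in scores.items() if score >= best - margin]


def pick_within_margin(scores: Dict[str, float], margin: float,
                       rng: Optional[random.Random] = None) -> str:
    """Choose uniformly at random among the near-best actions."""
    rng = rng or random.Random()
    return rng.choice(candidates_within_margin(scores, margin))


SCORES = {
    "Melee - Sword": 0.7,
    "Melee - Shield": 0.65,
    "Magic - Fireball": 0.62,
    "Ranged - Bow": 0.4,
    "Defend": 0.2,
}

print(candidates_within_margin(SCORES, 0.1))
print(pick_within_margin(SCORES, 0.1))
```

Passing a seeded `random.Random` makes the choices reproducible, which helps when replaying a bug report.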

This is just a simple example of how to add variety, but you can take the concept much further. In The Sims, the amount of randomness varies with how dire a Sim’s needs are. When a Sim really needs to go to the bathroom, they are likely to pick the most optimal way to satisfy that need, but in less critical situations there can be a lot more randomness in what they do next. This is further influenced by the traits a Sim can have, so that mean Sims, for example, are more likely to pick a mean social interaction than a friendly one.
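One way to approximate urgency-dependent randomness is to shrink the selection margin as the most pressing need becomes more urgent. This is a hypothetical rule for illustration, not how The Sims actually implements it; `margin_for_urgency`, `max_margin`, and the 0-1 `urgency` input are all assumptions.

```python
import random
from typing import Dict


def margin_for_urgency(urgency: float, max_margin: float = 0.3) -> float:
    """Hypothetical rule: the more urgent the most pressing need, the smaller the margin.

    urgency is clamped to [0, 1]; at 1 the margin is 0 (always take the best action),
    at 0 any action within `max_margin` of the best is fair game.
    """
    urgency = max(0.0, min(1.0, urgency))
    return max_margin * (1.0 - urgency)


def pick_action(scores: Dict[str, float], urgency: float, rng: random.Random) -> str:
    margin = margin_for_urgency(urgency)
    best = max(scores.values())
    candidates = [name for name, score in scores.items() if score >= best - margin]
    return rng.choice(candidates)


scores = {"use_toilet": 0.95, "watch_tv": 0.8, "chat": 0.75}
rng = random.Random(42)
print(pick_action(scores, urgency=1.0, rng=rng))  # critical need: always the best action
print(pick_action(scores, urgency=0.0, rng=rng))  # relaxed: any of the three
```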

Bucketing

But maybe not all types of actions are always equal, or you want to limit the AI’s options to a certain subset of actions in certain contexts. In these cases, we can assign each action to a “bucket”, based on whatever delineation we want, score each bucket and select one the same way we would an individual action, and then only score, and choose from, the actions within the bucket we selected.
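The two-stage selection can be sketched as: score the buckets, pick the best bucket, then score only the actions inside it. The `Bucket` type, the `bladder` context key, and the example scores are hypothetical; note that `watch_tv` scores higher than `use_toilet` but is ignored whenever the emergency bucket wins.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

Context = Dict[str, Any]


@dataclass
class Action:
    name: str
    score: Callable[[Context], float]


@dataclass
class Bucket:
    name: str
    score: Callable[[Context], float]
    actions: List[Action] = field(default_factory=list)


def choose_bucketed(buckets: List[Bucket], context: Context) -> Action:
    # Stage 1: pick a bucket the same way we would pick an action.
    bucket = max(buckets, key=lambda b: b.score(context))
    # Stage 2: only actions inside the chosen bucket are scored.
    # (Assumes every bucket has at least one action; max() raises on an empty list.)
    return max(bucket.actions, key=lambda a: a.score(context))


BUCKETS = [
    Bucket("emergency", lambda ctx: 1.0 if ctx["bladder"] < 0.15 else 0.0,
           [Action("use_toilet", lambda ctx: 0.6)]),
    Bucket("leisure", lambda ctx: 0.5,
           [Action("watch_tv", lambda ctx: 0.9), Action("read_book", lambda ctx: 0.4)]),
]

print(choose_bucketed(BUCKETS, {"bladder": 0.1}).name)  # use_toilet
print(choose_bucketed(BUCKETS, {"bladder": 0.8}).name)  # watch_tv
```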

The Sims series uses bucketing to help ensure high priority needs, such as a very low stat, are appropriately prioritized, and the Zoo Tycoon series has used a version of bucketing to keep its AI generic while also limiting actions to those that are appropriate for the context, such as when a guest is at a show. In Zoo Tycoon 2, dying is even just a bucket that takes priority over all other buckets, so that an animal won’t accidentally “wake up” at some point.

Weights

Sometimes, though, a bucket isn’t the right call, because we want our AI to evaluate all options fairly while still nudging it toward certain types of actions, without ruining the scoring curves we’ve already put together. In these cases, weighting actions by category is an option. These weights multiply the final action scores and can push them outside the 0-1 range to keep our priorities straight.

Say we want an aggressive enemy that strongly favors attacks over defensive or idle actions unless the situation is really dire. We could assign a weight of 2 to offensive abilities and a weight of 1 to all others. When it’s time to score our actions, we multiply each raw action score by its category’s weight, which doubles the score for attacks and makes them that much more likely to be selected. In the table below, Heal would win on raw score alone, but the weighted Attack comes out on top.

| Action | Score | Weight | Weighted Score |
|---|---|---|---|
| Attack | 0.6 | 2 | 1.2 |
| Defend | 0.4 | 1 | 0.4 |
| Heal | 0.7 | 1 | 0.7 |
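The weighting from the table can be reproduced in a few lines. The category names and the `weighted_scores` helper are illustrative assumptions; the raw scores and weights match the table.

```python
from typing import Dict, Tuple

# Weight per action category: this aggressive enemy doubles offensive actions.
CATEGORY_WEIGHTS: Dict[str, float] = {"offensive": 2.0, "other": 1.0}

# action name -> (category, raw utility score in [0, 1])
ACTIONS: Dict[str, Tuple[str, float]] = {
    "Attack": ("offensive", 0.6),
    "Defend": ("other", 0.4),
    "Heal": ("other", 0.7),
}


def weighted_scores(actions: Dict[str, Tuple[str, float]],
                    weights: Dict[str, float]) -> Dict[str, float]:
    """Multiply each raw score by its category's weight (results may exceed 1)."""
    return {name: raw * weights[category] for name, (category, raw) in actions.items()}


raw = {name: score for name, (_, score) in ACTIONS.items()}
weighted = weighted_scores(ACTIONS, CATEGORY_WEIGHTS)
print(max(raw, key=raw.get))            # Heal wins on raw score
print(max(weighted, key=weighted.get))  # Attack wins once weighted
```

Because the weights are applied after the curves, you can retune an enemy’s personality without touching any of its scoring curves.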

Conclusion

And that’s a look at Utility AI in detail and how different games use it. If you want to learn more, including tips for implementing it in your own game, Game AI Pro and Dave Mark’s GDC talks are both great places to start.